T-Shirts vs Tailored Suits: Procurement Differences Between AI Hardware & ASICs

What Bitcoin miners need to understand before they commit to AI infrastructure — and which instincts will mislead them.

Miners thinking about moving into AI infrastructure often describe the experience the same way: "It feels like a completely different business." And it is—not just because the compute is harder to manage (because it is), but also because the way you procure, deploy, and operate that compute is fundamentally different.

The gap isn't only technical. It's conceptual. And understanding it before you commit to a transition can save you months of frustration and millions in misallocated capital.

The Core Difference: Standardization vs Customization

Let's go back to first principles. When you buy an ASIC for Bitcoin mining, you're buying a product. The S21 comes to you fully specified, fully tested, and ready to hash. Every unit is identical. You order quantities, they arrive on a schedule, you rack them, and they work. You're not designing anything. You're not configuring anything beyond pointing the miner at a pool. It's standardized, it's fast, and it's predictable.

When you build GPU infrastructure for AI workloads, you're buying components, not a product. You may start with a pre-configured server, but you still need storage and networking components chosen for your specific configuration. Almost nothing is off-the-shelf. Nearly everything is custom.

That's the difference between a t-shirt and a tailored suit.

T-Shirts (ASICs): Pick a Size, Get Dressed

ASICs work because they're standardized commodities. You decide on a model (S21, M53, etc.), and you're selecting from a range that's already defined by manufacturers. You pick your quantity, your delivery timeline, and you're done. Every S21 is the same. Every unit performs to specification. Every unit has the same power consumption, cooling requirements, and footprint.

This is exactly how t-shirt sizing works. You know what an XL means because the industry standardized it. You can order XL t-shirts from a hundred different brands and they'll be roughly equivalent. They fit the same way. You can wear them to work, to a concert, to the gym. They're versatile and predictable.

Mining scaled because ASIC procurement scaled. You grew from 10 units to 100 to 10,000 by just changing the quantity. The complexity didn't increase. The timeline didn't balloon. You had repeatable processes because every unit was identical.

Tailored Suits (GPU Infrastructure): Reference Architectures Are Like Suit Styles—Dependent on Their Use

GPU infrastructure does have reference architectures. NVIDIA's DGX BasePOD is the canonical example. These are pre-validated designs that give you a foundation. But here's the catch: a reference architecture is a blueprint, not a plug-and-play product.

When you order a GPU server, you're not just picking a model and quantity like you do with ASICs. Even starting from a reference architecture, you're deciding storage capacity, CPU count, RAM configuration, NIC throughput, power delivery, cooling architecture, and interconnect topology. Each decision affects the others. Reference architectures compress some of that decision-making—they say "here's what works"—but they don't eliminate it.

This is still what a tailored suit is. You don't just pick a size. You start with a template created by someone who knows how to build for you, but you still have to decide how it gets configured for your specific use and measurements (or, for GPU infrastructure, your workload and site). It takes time. It's expensive. And even with a reference architecture as your foundation, the consequences of misconfiguration are immediate and costly.

A beautifully tailored tuxedo is a masterpiece... until you wear it to a casual dinner. It's got everything you should need: pants, a white button-down, a tie, and a jacket. But it's the wrong occasion. Configure your GPU cluster for cross-server training bandwidth when you need low-latency inference to end users, and you have the same problem: excellent architecture, wrong workload. You can't just swap out the lapels. You have to rebuild.

The industry uses the term SCORN to describe GPU server spec sheets: Storage, CPUs, Operating System, RAM, NICs. Every variable matters. Every variable constrains your workload options. A reference architecture handles some of that complexity upfront, but you still have to make configuration decisions that don't exist in ASIC procurement. There are no t-shirt sizes. This isn't hypothetical: at GTC 2026, infrastructure leaders discussed how GPU procurement complexity cascades into coordination challenges that derail transitions more often than any single technical problem.
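To make the contrast concrete, here is a minimal sketch of what each kind of order asks you to decide. The class names, field names, and example values are hypothetical and chosen only for illustration. The SCORN fields come from the acronym above, but a real spec sheet carries many more options under each one.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class AsicOrder:
    """A t-shirt order: pick a model, pick a quantity."""
    model: str
    quantity: int


@dataclass(frozen=True)
class GpuServerSpec:
    """A tailored order: one field per SCORN letter, plus quantity."""
    storage_tb: float        # S: Storage
    cpus: int                # C: CPUs
    operating_system: str    # O: Operating System
    ram_gb: int              # R: RAM
    nic_gbps: int            # N: NICs
    quantity: int


def decisions_per_order(order_type) -> int:
    """Count the fields a buyer must decide, excluding quantity."""
    return sum(1 for f in fields(order_type) if f.name != "quantity")


# Example values are placeholders, not recommendations.
asic = AsicOrder(model="S21", quantity=1000)
gpu = GpuServerSpec(
    storage_tb=30.0,
    cpus=2,
    operating_system="Linux",
    ram_gb=2048,
    nic_gbps=400,
    quantity=16,
)

print(decisions_per_order(AsicOrder), decisions_per_order(GpuServerSpec))
```

The count understates the real gap, because the GPU fields constrain one another while the ASIC model does not, but it shows why there is no equivalent of "XL" to order.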

Why This Matters in Practice

This distinction sounds academic until you're three months into procurement and realize that everything is interdependent.

Your colocation facility has space available, but the fiber connectivity won't be lit for another six weeks. Your GPU shipment just arrived, but RAM prices spiked and now your planned server configuration is 40% more expensive. Your hardware is deployed, but your network architecture doesn't support the bandwidth your workload actually requires, so you're operating at half the throughput you expected, and redesigning the network means pulling servers offline and reconfiguring.

With ASICs, if your power delivery was wrong, you add more circuits or upgrade breakers. The hardware doesn't change. With GPU infrastructure, wrong assumptions about networking, storage, or compute-to-memory ratios often mean redoing the entire build.

And this only gets more complex as you scale. Training clusters need high-bandwidth interconnects between servers. Inference workloads need low-latency connections to end users. A mining facility 500 miles from the nearest city can work fine for training. It is a much harder fit for latency-sensitive inference. Your site characteristics matter in ways they never did for mining. That's not because mining is simple, but because ASIC workloads don't care about latency to external systems the way AI workloads do.

The physical coordination alone is brutal. Colocation lead times run around nine months. Procurement timelines stretch six to eighteen months under ideal conditions. You're not just managing procurement—you're orchestrating hardware, facility buildout, and fiber connectivity as a single dependent system. Miss one and the whole timeline slips.
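A rough way to see why one late workstream moves the whole date: if hardware, facility, and fiber all start at the same time and the site is usable only when all three are ready, the go-live month is simply the slowest of the three. The sketch below assumes that simplified model. The nine-month colocation figure comes from the paragraph above, the 12-month hardware figure is one point picked from the six-to-eighteen-month range, and the fiber figure is purely an assumption.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Workstream:
    name: str
    lead_time_months: float  # months from kickoff until this piece is ready


def go_live_month(workstreams):
    """All workstreams start at month 0; the site is ready when the last one finishes."""
    return max(w.lead_time_months for w in workstreams)


def gating_workstream(workstreams):
    """Name of the workstream that currently sets the go-live date."""
    return max(workstreams, key=lambda w: w.lead_time_months).name


def with_slip(workstreams, name, months):
    """Return a new plan in which one workstream slips by the given months."""
    return [
        Workstream(w.name, w.lead_time_months + months) if w.name == name else w
        for w in workstreams
    ]


plan = [
    Workstream("colocation", 9),     # "around nine months" per the text
    Workstream("gpu_hardware", 12),  # assumed point in the 6-18 month range
    Workstream("fiber", 10),         # assumed, not from the text
]

slipped = with_slip(plan, "fiber", 3)

print(go_live_month(plan), gating_workstream(plan))
print(go_live_month(slipped), gating_workstream(slipped))
```

In this toy plan, hardware gates the schedule until fiber slips, at which point fiber becomes the gate and the date moves with it. Real projects have sequential dependencies too (you can't rack servers before the facility is ready), which only makes slips more expensive.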

The Operational Complexity Extends Beyond Procurement

Once hardware is deployed, the operational differences compound.

ASIC mining is relatively static. You plug in, point at a pool, monitor hashrate, and if a unit fails, you replace the hashboard or the unit. GPU infrastructure requires ongoing OS management, driver updates, firmware revisions, and compatibility monitoring across every component in the stack. When a GPU server underperforms, diagnosis isn't straightforward. It could be driver-related, thermal throttling, network saturation, or misconfigured software. You need people who can diagnose complex systems, not just replace hardware.

Monetization is different too. Mining is automatic. Hashrate flows to a pool, BTC flows back. GPU monetization requires a go-to-market strategy. Are you renting capacity to hyperscalers? Running a neocloud? Building your own AI applications? Each path has different sales cycles, SLAs, and margins. You're running a business with customers, not just operating infrastructure. That distinction matters because customers have expectations. They measure uptime. They measure latency. When things go wrong, they don't give you a grace period the way a mining pool does.

What This Means for Miners

If you've operated ASICs successfully, don't assume that experience directly transfers to GPU infrastructure. Some of it does: power management at scale, site operations, and an understanding of high-density compute environments. Those skills are valuable, and your existing power assets are genuinely gold. At GTC, energy leaders underscored that miners already have the hardest piece of the infrastructure puzzle solved: megawatt-scale power assets took years to secure and are exactly what AI/HPC facilities need.

But mining instincts can also mislead you, and they often do. Miners are used to fast deployment: weeks, not quarters. Miners are accustomed to standardized infrastructure with linear scaling. Miners often operate with minimal compliance overhead. And miners rarely deal with export controls on equipment.

None of those things are true in GPU infrastructure. GPU procurement, for one, has regulatory constraints that ASIC procurement doesn't.

The transition works when you approach it like what it is: building custom infrastructure for bespoke workloads, not deploying standardized equipment at scale. It requires patience, different people, and a fundamentally different mindset about what "done" looks like.

The Bottom Line

ASICs work because they're commodities. You can replicate the exact same unit thousands of times. GPU infrastructure works when you understand that you're building something specific for a specific purpose. It takes longer. It costs more. It requires different skills. But it's worth it because the market opportunity is real, and miners do have genuine advantages in the pieces that matter most.

The catch? You have to know which instincts to keep and which ones will sink you.

Don't assume you're buying t-shirts when you're actually commissioning tailored suits. And don't assume your mining expertise automatically translates to data center operations. Evaluate your site against what the workload actually needs, not what the pitch deck says, because the window for conversion advantage is compressing as purpose-built AI facilities come online.

The best time to make that evaluation was six months ago. The second best time is now.

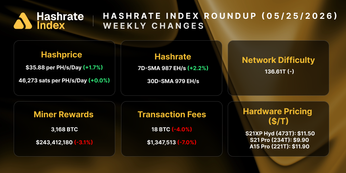
