The AI ASIC Market, Part 2: How Broadcom and Marvell Quietly Power Hyperscaler AI

Two companies enable more than 80% of hyperscaler custom AI silicon. Here's how they got there and where they're headed.

In Part 1 of this series, we mapped the five hyperscaler AI ASIC programs — Google TPU, AWS Trainium, Microsoft Maia, Meta MTIA, and OpenAI's pre-production custom chip — and flagged a supply-chain reality most coverage glosses over: most of those programs are co-designed with one of two outside companies. Broadcom designs Google's TPU and OpenAI's custom chip. Marvell designs AWS Trainium and Microsoft Maia. The hyperscaler "in-house silicon" race is, structurally, a Broadcom and Marvell duopoly with five logos on top.

Part 2 zooms in on those two design partners. Broadcom and Marvell occupy the same strategic category but at very different scales — and their economics, customer dynamics, and risk profiles look almost nothing like NVIDIA's or like the standalone AI chip companies we'll cover in Parts 3 and 4. Understanding how design partners actually make money is the foundation for evaluating the rest of the independent AI chip landscape.

TLDR

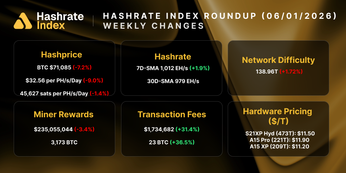

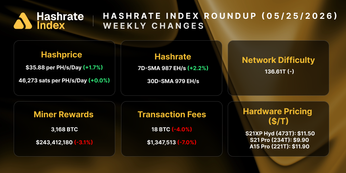

- Two companies — Broadcom and Marvell — enable more than 80% of hyperscaler custom AI silicon. Broadcom holds roughly 70% of the design services market; Marvell anchors the structural #2 position.

- Broadcom's gross margins (~78.6%) exceed NVIDIA's (~73.5%), and a $73 billion committed customer backlog gives it multi-year revenue visibility most semiconductor companies don't have.

- The design services model is structurally capital-light. Hyperscaler customers absorb capex and manufacturing risk; design partners capture IP and licensing economics.

- The gating variable for both companies is customer concentration. Alphabet is Broadcom's largest AI customer; AWS and Microsoft anchor Marvell. Any meaningful in-house design move by these customers compresses the duopoly's economics.

The Two Types of Independent AI Chip Companies

The independent AI chip landscape divides into two groups with fundamentally different business models. The distinction matters because applying the same mental model to both produces wrong conclusions.

Design enablers (Broadcom and Marvell) don't sell AI chips under their own brand for data center deployment. They provide custom ASIC design services, intellectual property, and networking silicon that hyperscalers use to build their chips. When Google ships a TPU, Broadcom captures economics on the design services and networking IP that went into it; Google captures the chip itself. The design enabler's competitive dynamic is with the other design enabler, not with NVIDIA or with the hyperscalers they serve. The business is capital-light and hyperscale-anchored: a platform model rather than a product model.

Direct competitors (Groq, Cerebras, Etched, Tenstorrent, and Tensordyne) build and sell their own AI chips. They compete head-on with NVIDIA's GPUs and, in some cases, with hyperscaler in-house silicon. Each has a distinctive architectural thesis. The business model is closer to that of a traditional semiconductor startup: significant capital requirements, long development cycles, execution risk on silicon validation, and customer acquisition challenges. Valuations swing wildly based on technical proof points and anchor customer wins.

A buyer evaluating Broadcom needs a different mental model than one evaluating Tensordyne, even though both fall under the "independent AI chip companies" label. The categories share branding but almost nothing else — different revenue scales, different competitive positions, different risk profiles, different growth math. The rest of this article covers the design enablers; Parts 3 and 4 cover the direct competitors.

Broadcom: The $100 Billion Design Partner

Broadcom is the dominant AI ASIC design enabler on every measurable axis. Current market share estimates place Broadcom at roughly 70% of the custom AI accelerator design services market, near the midpoint of the 60–80% range Bloomberg Intelligence flagged earlier in 2026. The concentration is increasing as customer wins compound. Confirmed XPU customers include Google, Meta, OpenAI, Anthropic, and, new in 2026, Apple.

The financial trajectory shows the acceleration that CEO Hock Tan has been guiding toward for years. Broadcom's AI semiconductor segment has grown between 74% and 140% year-over-year in the periods shown below, with the most recent guidance pointing to the fastest year-over-year growth yet in Q2 FY2026:

| Period | AI Semi Revenue | YoY Growth | Total Revenue | Adj. EBITDA Margin |
| --- | --- | --- | --- | --- |
| Q1 FY2025 | $4.1B | +77% | $14.9B | ~68% |
| Q4 FY2025 | $6.5B | +74% | $18.0B | ~68% |
| Q1 FY2026 | $8.4B | +106% | $19.3B | 68% |
| Q2 FY2026 (guidance) | $10.7B | +140% | $22.0B | 68% |

The table tells the story prose can't tell as cleanly. The +140% YoY growth in the Q2 FY2026 guidance isn't a one-time spike — it's the third consecutive quarter of accelerating growth on top of an already-large base. The implied annual AI revenue run rate by the end of FY2026 approaches $50 billion. Hock Tan's $100 billion FY2027 AI chip revenue target is backed by a $73 billion committed customer backlog, which gives this trajectory the kind of multi-year visibility that semiconductor companies almost never have.
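The arithmetic behind these figures can be checked with a short sketch. It uses only the AI semiconductor revenue figures from the table above plus the $73 billion backlog and $100 billion target cited in the text. The function names and the simple "one quarter times four" annualization are illustrative assumptions, not Broadcom's own methodology.

```python
# AI semiconductor revenue in $B, copied from the table above (rounded figures).
AI_REVENUE_B = {
    "Q1 FY2025": 4.1,
    "Q4 FY2025": 6.5,
    "Q1 FY2026": 8.4,
    "Q2 FY2026 (guidance)": 10.7,
}

BACKLOG_B = 73.0         # committed customer backlog cited in the text
FY2027_TARGET_B = 100.0  # FY2027 AI chip revenue target cited in the text


def yoy_growth_pct(current_b: float, year_ago_b: float) -> float:
    """Year-over-year growth, in percent."""
    return (current_b / year_ago_b - 1.0) * 100.0


def implied_year_ago_base(current_b: float, growth_pct: float) -> float:
    """Back out the year-ago revenue implied by a stated growth rate."""
    return current_b / (1.0 + growth_pct / 100.0)


def annualized_run_rate(quarterly_b: float) -> float:
    """Naive run rate: one quarter's revenue times four (an assumption)."""
    return quarterly_b * 4.0


if __name__ == "__main__":
    q1_growth = yoy_growth_pct(AI_REVENUE_B["Q1 FY2026"], AI_REVENUE_B["Q1 FY2025"])
    q2_guidance = AI_REVENUE_B["Q2 FY2026 (guidance)"]

    print(f"Q1 FY2026 YoY growth from rounded inputs: {q1_growth:.1f}%")
    print(f"Year-ago base implied by +140%: ${implied_year_ago_base(q2_guidance, 140.0):.2f}B")
    print(f"Annualized run rate at Q2 guidance: ${annualized_run_rate(q2_guidance):.1f}B")
    print(f"Backlog as share of FY2027 target: {BACKLOG_B / FY2027_TARGET_B:.0%}")
```

On these rounded inputs, Q1 FY2026 growth works out to about 105%, close to the 106% in the table, with the gap attributable to rounding. The Q2 guidance annualizes to about $42.8 billion, so a run rate near $50 billion by the end of FY2026 would require roughly $12.5 billion in a single quarter. The backlog covers 73% of the FY2027 target.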

The Google supply agreement structurally locks in a meaningful portion of that backlog. In April 2026, Broadcom and Google formalized a long-term agreement through 2031 for Broadcom to develop and supply custom TPUs across Google's future generations. Five-year supply commitments are typical in aerospace and defense, not commercial semiconductors. A single hyperscaler customer has committed to Broadcom through the end of the decade.

The design services model is structurally capital-light. Hyperscaler customers own the chip design and absorb manufacturing capex; Broadcom provides IP, networking technology, interconnect fabric, and design expertise. The result is gross margins of 78.6% — meaningfully higher than NVIDIA's ~73.5% — paired with capex that's a fraction of what NVIDIA, Intel, or AMD spend. Broadcom captures the economics of the AI infrastructure build-out without the balance sheet intensity of becoming a chip manufacturer.

The risk is customer concentration. Alphabet is Broadcom's largest AI customer by a meaningful margin. Any move by Google toward fully in-house design capability would cut directly into Broadcom's AI revenue. The 2031 supply agreement is the structural response to this risk, locking in a five-year commitment, but it doesn't eliminate the fact that a significant portion of Broadcom's $100 billion FY2027 target rests on a single customer. The Apple and Anthropic customer additions mitigate this, but don't fully resolve it.

Marvell Technology: The Secondary Design Enabler

Marvell occupies the same strategic category as Broadcom — custom AI ASIC design services and AI networking chips for hyperscaler customers — but at meaningfully smaller scale. Marvell commands 20–25% of the custom AI ASIC design services market per Bloomberg Intelligence, primarily anchored by AWS Trainium and Microsoft Maia design wins.

This is a structurally interesting position. Both of Marvell's primary hyperscaler customers are in catch-up mode relative to Google's TPU, which is a Broadcom-enabled program. The hyperscaler ASIC design services market is effectively divided: Broadcom enables the leader, and Marvell enables the chasers. Competitive pressure flared up when Broadcom secured an exclusive OpenAI networking deal, with analysts flagging near-term pressure on Marvell's networking business.

Marvell's own Q4 FY2026 earnings filing acknowledged the strategic pressure directly, flagging risk factors including customer concentration in data centers and the risk that customers develop their own fully in-house design solutions. It's a measured acknowledgment of the dynamic Broadcom faces too, but at lower revenue scale Marvell has less cushion for any single customer loss.

Why Marvell still matters: in a market where only two companies enable 80%+ of hyperscaler custom AI silicon, being the #2 is a valuable position. Hyperscalers want dual-source design relationships for risk-mitigation reasons — the same reason they want dual-source manufacturing and dual-source HBM. That structural incentive keeps Marvell in the game even as Broadcom's dominance increases.

Broadcom vs. Marvell: How the Two Design Partners Compare

The two companies sit in the same category but the distinctions are sharper than the "design enablers" label suggests. Broadcom is anchored by hyperscalers leading the ASIC race; Marvell is anchored by hyperscalers chasing the leader. Broadcom has multi-year supply commitments locked in; Marvell does not. The customer rosters share zero overlap: each hyperscaler picked one partner per program and stuck with it.

| | Broadcom | Marvell |
| --- | --- | --- |
| Design services market share | ~70% | 20–25% |
| FY2025 total revenue | $63.9B | ~$7B |
| Hyperscaler customers | Google, Meta, OpenAI, Anthropic, Apple | AWS, Microsoft |
| Customer profile | Anchored by ASIC race leader (Google TPU) | Anchored by hyperscalers in catch-up mode |
| Long-term supply commitment | Google through 2031 | None publicly disclosed |
| Strategic posture | Dominant platform | Structural #2 / dual-source counterweight |

The takeaway from the comparison is that "design enabler" is a category, not a homogeneous business. Broadcom is the $63.9 billion-revenue dominant platform with multi-year hyperscaler lock-in. Marvell is the structural #2 — strategically valuable to hyperscalers as a dual-source counterweight, but operating without the same long-term commitments or customer breadth. Both benefit from the migration of AI silicon away from NVIDIA's vertically integrated model, but they benefit at very different magnitudes.
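A rough sense of the gap in magnitude comes from combining the share and revenue rows of the comparison table. The sketch below is only illustrative: the constant and function names are assumptions, the inputs are the approximate figures from the table, and the design services share is not necessarily measured on the same basis as the "more than 80% of hyperscaler custom AI silicon" figure.

```python
# Approximate figures copied from the comparison table above.
BROADCOM_SHARE = 0.70               # "~70%" of design services
MARVELL_SHARE_RANGE = (0.20, 0.25)  # "20-25%" of design services
BROADCOM_REVENUE_B = 63.9           # FY2025 total revenue, $B
MARVELL_REVENUE_B = 7.0             # "~$7B" FY2025 total revenue, $B


def combined_share_range(leader: float, challenger: tuple[float, float]) -> tuple[float, float]:
    """Low and high estimates of the two partners' combined market share."""
    low, high = challenger
    return leader + low, leader + high


def revenue_multiple(larger_b: float, smaller_b: float) -> float:
    """How many times larger one company's revenue is than the other's."""
    return larger_b / smaller_b


if __name__ == "__main__":
    low, high = combined_share_range(BROADCOM_SHARE, MARVELL_SHARE_RANGE)
    multiple = revenue_multiple(BROADCOM_REVENUE_B, MARVELL_REVENUE_B)
    print(f"Combined design services share: {low:.0%} to {high:.0%}")
    print(f"Broadcom total revenue vs. Marvell: {multiple:.1f}x")
```

On these inputs the two partners together hold roughly 90% to 95% of design services, and Broadcom's total revenue is about nine times Marvell's. Total revenue covers each company's whole business, not just AI, so the multiple is a scale comparison rather than an AI-only one.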

What the Design Partner Duopoly Means for the AI Chip Market

The Broadcom and Marvell duopoly reframes how to think about AI compute capacity. Most coverage treats hyperscaler ASICs as evidence that hyperscalers are reducing their dependence on NVIDIA. That framing is incomplete. Hyperscalers are reducing dependence on NVIDIA — but the dependence is migrating to two design partners, not dissolving into competitive plurality.

The economics of the migration favor Broadcom and Marvell more than they favor the hyperscalers themselves. Hyperscalers absorb capex, manufacturing risk, and validation timelines. Design partners capture the IP and licensing economics with capital-light gross margins. When Broadcom posts gross margins above NVIDIA's while spending a fraction of the capex, the design partner model isn't a fallback; it's a better business than the chip vendor business it's helping displace.

What This Means for Bitcoin Miners and Infrastructure Investors

For Bitcoin miners and infrastructure investors evaluating AI exposure, the structural takeaway is that the highest-margin, lowest-capital-intensity economics in the AI compute stack don't sit with NVIDIA or the hyperscalers — they sit with the design partners enabling the migration. NVIDIA remains the dominant GPU vendor and the primary buy-side option for compute capacity. But the long-term wealth-creation dynamic in AI silicon increasingly looks like a Broadcom-and-Marvell story playing out underneath the hyperscaler headlines.

For infrastructure operators considering AI/HPC pivots, the design enabler dynamic also shifts how to think about chip availability. Hyperscaler ASICs aren't just about Google or Microsoft chip strategy — they're about the design partner's capacity to scale across multiple hyperscaler customers simultaneously. Bottlenecks at Broadcom or Marvell affect every program they enable.

Looking Ahead to Part 3

Broadcom and Marvell are one half of the independent AI chip universe — companies that enable the chips hyperscalers build for themselves. The other half looks completely different: standalone companies designing, manufacturing, and selling their own AI silicon in direct competition with NVIDIA. In Part 3, we cover the three highest-profile names in that group — Groq, Cerebras, and Etched — and the very different theses each one represents.
