Token Factories, GPU Second Life, Energy Demand Growth: 6 Takeaways from GTC 2026

We attended GTC 2026. Here are the 6 takeaways that matter for infrastructure operators and capital allocators.

NVIDIA's GTC 2026 drew over 30,000 attendees to San Jose for a week of keynotes, panels, and product announcements mapping where AI infrastructure is headed.

We attended sessions spanning capital markets, energy, enterprise AI, and hardware architecture. Most coverage elsewhere focuses on chips and models. Here's what matters for the people who build, finance, and operate physical infrastructure.

1. Tokens Are the New Commodity

Jensen Huang's keynote reframed the entire AI infrastructure thesis in five words: "Compute is your revenue now."

The argument is straightforward. Data centers are no longer storage facilities; they are factories. The product is tokens, the workload is inference, and the efficiency metric that determines profitability is tokens per watt.

Jensen was blunt about architecture risk: "If you have the wrong architecture, even if it's free, it's not cheap enough."

NVIDIA doubled its cumulative demand guidance from $500B (announced at GTC 2025) to over $1T through 2027. The revenue split: 60% from top hyperscalers, 40% from neoclouds, sovereign AI, industrial, and enterprise (a long tail that's growing fast).

For mining operators who think in J/TH and $/MWh, the framing translates directly: tokens per watt is to an AI factory what J/TH is to a mine. The unit is different, but the optimization problem is the same. The question is whether you're building the factories, supplying them with power and sites, or both.
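To make the comparison concrete, here is a minimal Python sketch of energy cost per unit of output in both worlds. It assumes energy is the only cost being modeled (no capex, cooling overhead, or PUE), and the efficiency and power-price inputs are hypothetical, not figures from GTC. Tokens per second per watt reduces to tokens per joule, which is why the first function takes that unit.

```python
JOULES_PER_MWH = 3.6e9  # 1 MWh = 3.6e9 J
SECONDS_PER_DAY = 86_400


def energy_cost_per_million_tokens(tokens_per_joule: float, usd_per_mwh: float) -> float:
    """Energy-only cost (USD) of generating one million tokens.

    tokens_per_joule equals (tokens/s) / W, i.e. "tokens per watt" at steady state.
    """
    joules = 1_000_000 / tokens_per_joule
    return joules / JOULES_PER_MWH * usd_per_mwh


def energy_cost_per_th_day(j_per_th: float, usd_per_mwh: float) -> float:
    """Mining analog: energy-only cost (USD) of running 1 TH/s for one day.

    J/TH is numerically watts per TH/s, so multiply by seconds per day.
    """
    joules = j_per_th * SECONDS_PER_DAY
    return joules / JOULES_PER_MWH * usd_per_mwh


# Hypothetical inputs for illustration only.
print(energy_cost_per_million_tokens(tokens_per_joule=10.0, usd_per_mwh=50.0))
print(energy_cost_per_th_day(j_per_th=20.0, usd_per_mwh=50.0))
```

In both functions, improving efficiency by 2x (twice the tokens per joule, or half the joules per TH) halves the energy cost per unit of output. That is the sense in which the two metrics describe the same optimization problem.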

2. Vera Rubin: A System, Not a Chip

The most important hardware detail from GTC isn't a spec number—it's a design philosophy. Vera Rubin is not a GPU announcement. It's a complete, vertically integrated supercomputer: 7 chips, 5 rack-scale computers, one unified platform designed end-to-end for agentic AI.

The highlights that matter for deployment economics: 100% liquid cooling is now required, not optional. The system runs on 45°C hot water, warm enough that facilities can largely reject heat without chillers, cutting facility cooling costs. Installation time dropped from 2 days to 2 hours, a shift that compresses deployment timelines and reduces commissioning labor. Rubin Ultra, housed in the new Kyber rack, pushes to 144 GPUs in a single NVLink domain.

For the detailed hardware analysis, see our full specs breakdown of the Vera Rubin NVL72 platform.

3. The Infrastructure Capital Supercycle

The numbers at GTC's capital markets panel were staggering. Will Brilliant of Global Infrastructure Partners (now part of BlackRock) described gigawatt-scale campuses costing $20–25B for shell and power and another $25B+ for compute. Overall capacity may need to triple. The total spend runs into the trillions, and it’s debt-financed, long-duration, utilization-dependent infrastructure capital.
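As a rough cross-check of how per-campus figures add up to "the trillions", here is a minimal sketch using the panel's per-gigawatt numbers. It assumes costs scale linearly with gigawatts and treats the "$25B+" compute figure as a floor, so both ends of the returned range are lower bounds. The function name is illustrative.

```python
from typing import Tuple

# Per-GW figures quoted on the panel (USD). Compute is a floor ("$25B+").
SHELL_AND_POWER_PER_GW = (20e9, 25e9)
COMPUTE_PER_GW_FLOOR = 25e9


def campus_capex_floor(gigawatts: float) -> Tuple[float, float]:
    """Lower-bound all-in capex range (USD) for a campus of the given size.

    Assumes linear scaling with gigawatts; both ends use the compute floor,
    so actual spend is likely higher.
    """
    low = gigawatts * (SHELL_AND_POWER_PER_GW[0] + COMPUTE_PER_GW_FLOOR)
    high = gigawatts * (SHELL_AND_POWER_PER_GW[1] + COMPUTE_PER_GW_FLOOR)
    return low, high


for gw in (1, 20):
    low, high = campus_capex_floor(gw)
    print(f"{gw} GW: ${low / 1e9:,.0f}B to ${high / 1e9:,.0f}B or more")
```

At these rates a single gigawatt costs at least $45–50B all-in, so roughly 20 GW of such campuses already approaches $1T.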

But the bottlenecks aren't where most people assume. CoreWeave co-founder Brian Venturo was emphatic: skilled labor is the most acute constraint, not power. A single gigawatt campus can require 9,000+ workers from 40+ states. Interconnection queues are clogged with non-viable projects, making power scarcity appear worse than it is. And rapidly changing tech stacks cause cost overruns and mid-build redesigns.

Mira Murati, CEO of Thinking Machines Lab, put it plainly: "Dollars aren't the scarcest input; strategy, timing, and the right infrastructure fit are."

For mining operators, the capital supercycle creates demand for sites, power, and operational expertise (skills the mining industry has in depth). But participating means meeting infrastructure capital's expectations: long-term contracts, high utilization, and execution excellence.

4. Older GPUs Get a Second Life

The most directly relevant statement for the GPU hardware market came from Michael Intrator, CoreWeave's CEO. His argument: as inference workloads are disaggregated—prefill separated from decode, model layers distributed across different hardware—it becomes possible to route specific tasks to specific GPUs. Older hardware handles the workloads it's suited for, and leading-edge GPUs focus on leading-edge tasks.

The implication: the 4–5 year useful life traditionally assumed for data center GPUs may be more like 8–10 years.
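The routing idea Intrator describes can be sketched in a few lines. This is a minimal illustration, not CoreWeave's scheduler: the pool names, memory sizes, generation numbers, hourly costs, and task labels are hypothetical, and real disaggregated serving also weighs memory bandwidth, interconnect, and latency targets. The rule shown is simply "send each task to the cheapest pool that meets its requirements", which is what lets older GPUs keep earning on the work they can still handle.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class GpuPool:
    name: str             # hypothetical pool label
    generation: int       # higher number = newer hardware
    memory_gb: int        # memory per GPU
    cost_per_hour: float  # hypothetical internal cost per GPU-hour


@dataclass
class Task:
    name: str             # hypothetical task label
    min_memory_gb: int    # smallest per-GPU memory the task fits in
    min_generation: int   # oldest hardware generation that can run it


def route(task: Task, pools: List[GpuPool]) -> Optional[GpuPool]:
    """Return the cheapest pool that satisfies the task, or None if none does."""
    eligible = [
        p for p in pools
        if p.memory_gb >= task.min_memory_gb and p.generation >= task.min_generation
    ]
    return min(eligible, key=lambda p: p.cost_per_hour, default=None)


pools = [
    GpuPool("older", generation=1, memory_gb=80, cost_per_hour=1.0),
    GpuPool("current", generation=2, memory_gb=192, cost_per_hour=2.5),
    GpuPool("leading-edge", generation=3, memory_gb=288, cost_per_hour=5.0),
]

light = Task("light task", min_memory_gb=40, min_generation=1)
heavy = Task("heavy task", min_memory_gb=200, min_generation=3)
print(route(light, pools).name)
print(route(heavy, pools).name)
```

Which inference phase or model layer counts as "light" depends on the model and workload; the point of the sketch is only that a cost-aware router keeps older pools busy instead of idle.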

Gavin Baker of Atreides Management reinforced the point, emphasizing that extracting more value from existing infrastructure benefits lenders, operators, and the broader ecosystem. Intrator was direct: "We need to milk all the value we can to invest in the next wave."

For operators evaluating used hardware purchases or sitting on existing GPU inventory, this is a pricing signal. If inference disaggregation extends useful lives, H100s — and eventually Blackwell-era GPUs — retain value longer than current depreciation models assume. It also reinforces the mullet mining thesis: older hardware generates inference revenue while newer hardware handles demanding training workloads.
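To see why the useful-life assumption matters for pricing, here is a minimal straight-line depreciation sketch. Straight-line with zero salvage value is an assumption chosen for simplicity (operators and lenders may use other schedules), and the $100 purchase cost is a placeholder, not an H100 price.

```python
def book_value(cost: float, useful_life_years: float, age_years: float,
               salvage: float = 0.0) -> float:
    """Straight-line book value of an asset after age_years."""
    age = min(max(age_years, 0.0), useful_life_years)  # clamp to [0, life]
    return cost - (cost - salvage) * age / useful_life_years


def annual_depreciation(cost: float, useful_life_years: float,
                        salvage: float = 0.0) -> float:
    """Straight-line depreciation expense per year."""
    return (cost - salvage) / useful_life_years


# Placeholder cost; compare a 5-year schedule with a 10-year one at year 4.
for life in (5, 10):
    print(life, annual_depreciation(100.0, life), book_value(100.0, life, 4))
```

Under a 5-year schedule the placeholder asset is carried at 20% of cost after four years; under a 10-year schedule it is still carried at 60%, with half the annual expense. If disaggregation really extends useful lives, the longer schedule sits closer to the hardware's economic life, which is the sense in which current models may understate residual value.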

The caveat is real: the 8–10 year thesis depends on inference disaggregation maturing at scale and software stacks reliably supporting heterogeneous hardware. The direction is right. The timeline isn't guaranteed.

5. Energy: The Binding Constraint and the Biggest Opportunity

The energy panels at GTC painted a picture of demand growth that's difficult to overstate. Venkat Tirupati, ERCOT's CTO, shared numbers that should give anyone in infrastructure pause: 230 GW of large loads in the interconnection queue, roughly 70% of it (about 160 GW) data centers, against a current system peak of 85 GW.

Tirupati called it a "timing problem." Loads connect in 6–18 months. Generation takes 1–2 years. Transmission takes 3–6 years. The gap isn't about total capacity. It's about sequencing.
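The sequencing gap is simple arithmetic on ERCOT's ranges. This minimal sketch assumes a load request, new generation, and new transmission all start on the same day (a simplification) and computes how long a load that is ready to connect might wait on each dependency, from best case to worst case. Years are converted to months; the function name is illustrative.

```python
from typing import Tuple

Range = Tuple[int, int]  # (fastest, slowest) in months

LOAD = (6, 18)
GENERATION = (12, 24)    # 1-2 years
TRANSMISSION = (36, 72)  # 3-6 years


def wait_months(ready: Range, dependency: Range) -> Range:
    """Months a ready load waits on a dependency, best case and worst case."""
    best = max(dependency[0] - ready[1], 0)   # slowest load, fastest dependency
    worst = max(dependency[1] - ready[0], 0)  # fastest load, slowest dependency
    return best, worst


print("generation:", wait_months(LOAD, GENERATION))
print("transmission:", wait_months(LOAD, TRANSMISSION))
```

Under these assumptions a load can wait up to 18 months for generation and 18 to 66 months for transmission. Capacity may eventually exist; it just doesn't arrive in the order loads do.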

ERCOT's solution is flexibility. A data center requesting 500 MW can structure it as 100 MW firm with 400 MW flexible. It can curtail during grid stress in exchange for demand-response compensation. This lets large loads interconnect faster while contributing to grid stability.
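A firm-plus-flexible interconnection can be modeled as a floor plus a curtailable block. This is a minimal sketch, not ERCOT's actual tariff or dispatch logic: it assumes the grid operator signals a curtailment fraction for the flexible block each hour and that the firm block is always served, and it leaves out demand-response compensation. The function names are hypothetical.

```python
from typing import Iterable


def allowed_draw_mw(firm_mw: float, flexible_mw: float, curtail_fraction: float) -> float:
    """Power the site may draw when the flexible block is cut by curtail_fraction."""
    if not 0.0 <= curtail_fraction <= 1.0:
        raise ValueError("curtail_fraction must be between 0 and 1")
    return firm_mw + flexible_mw * (1.0 - curtail_fraction)


def energy_mwh(firm_mw: float, flexible_mw: float,
               hourly_curtailment: Iterable[float]) -> float:
    """Energy delivered over a series of one-hour intervals."""
    return sum(allowed_draw_mw(firm_mw, flexible_mw, c) for c in hourly_curtailment)


# ERCOT's example: a 500 MW request structured as 100 MW firm + 400 MW flexible.
print(allowed_draw_mw(100, 400, 0.0))  # no grid stress
print(allowed_draw_mw(100, 400, 1.0))  # flexible block fully curtailed
```

Miners will recognize the shape: the flexible block behaves like curtailable hashrate, and the firm block is the portion the site commits to run through grid stress.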

Southwest Power Pool told a similar story. Historical load growth ran under 1% annually; last year it exceeded 4% and is compounding. Planning studies that took up to 27 months were the bottleneck. SPP secured approval for a consolidated process with 90-day turnaround and is partnering with NVIDIA on AI-accelerated simulations targeting 80%+ runtime reductions.
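The jump from under 1% to over 4% annual growth is larger than it looks because it compounds. A minimal sketch of doubling time, assuming a constant annual rate for simplicity:

```python
import math


def years_to_double(annual_growth: float) -> float:
    """Years for load to double at a constant annual growth rate."""
    return math.log(2) / math.log1p(annual_growth)


for rate in (0.01, 0.04):
    print(f"{rate:.0%}: doubles in {years_to_double(rate):.1f} years")
```

At 1% a year, load takes roughly 70 years to double; at 4%, under 18. Planning processes sized for the old rate are the ones that became the bottleneck.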

Chris Dolan of Crusoe Energy described a bridge strategy mining operators will recognize: bring your own power. On-site generation using gas turbines fills the gap until grid interconnection arrives. AI factories, he argued, can evolve from grid liabilities to flexible grid assets that adapt to power availability.

For miners, this is familiar territory. Power procurement, curtailment economics, grid relationships, and site selection are core mining competencies. The demand-response model being built for AI data centers is structurally similar to how Bitcoin miners already interact with the grid.

6. Agentic AI Is Rewriting Infrastructure Requirements

One theme cut across nearly every session at GTC: the workload is changing, and it's changing what infrastructure needs to look like.

Sachin Katti, Head of Industrial Compute at OpenAI, described the progression clearly. Chatbots required small prompts and single-shot inference. Reasoning models added internal context across turns. Agents (the current frontier) demand vastly larger working sets, branching exploration, intensive tool use, persistent state, and sub-agent orchestration. The success metric shifts from response quality to task completion speed.

The infrastructure implications are significant. Agentic workloads need disaggregated inference across nodes, heterogeneous hardware mixing GPUs with CPUs and specialized accelerators, multi-tier storage, and networking that supports scale-up, scale-across, and scale-out simultaneously—a fundamentally different build profile than a training cluster.

In the keynote, Jensen announced NemoClaw—NVIDIA's enterprise agent deployment platform with security sandboxes, privacy protections, and policy engines. His prediction: every SaaS company becomes an “AgaaS” (Agent-as-a-Service) company. Whether or not the timeline is that aggressive, the direction is clear, and it means the "right" infrastructure is a moving target. Operators planning builds for the next 3–5 years need to think beyond GPU count to full system design.

What GTC 2026 Means for Operators on the Mining Side

GTC 2026 confirmed that the AI infrastructure buildout is a present-tense capital cycle. It’s measured in trillions, constrained by labor and energy, and evolving faster than most planning horizons accommodate.

For mining operators, the transferable skills are real. Power procurement, grid flexibility, site operations, cooling expertise, and hardware lifecycle management are exactly what this buildout demands. The CoreWeave thesis on GPU lifespans suggests the used hardware market has more runway than depreciation models assume. And the energy bottleneck is an opportunity for operators who already navigate grid economics.

The window is open, the demand is real, and the skills mining operators already have are more valuable in this market than most of them think.

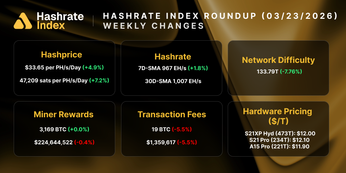

Hashrate Index Newsletter
