Evaluating Bitcoin Mining Sites for AI Training & AI Inference

Your mining site's characteristics determine whether it's better suited for AI training, inference, both, or neither. This five-question evaluation framework helps you figure out which workload fits your assets before procurement conversations start.

GPUaaS for Bitcoin Miners: How to Evaluate the Opportunity at Your Site

GPU-as-a-Service demand is real—but not every mining site qualifies. Here’s how to evaluate location, power reliability, cooling, and fiber for training vs inference.

Neoclouds Explained: The GPU Cloud Providers Powering AI

Roughly one-third of AI workloads run on neoclouds, not hyperscalers. Here's what neoclouds are, who the major providers are, and why Bitcoin miners should pay attention.

AI Inference vs Training: What's the Difference and Why It Matters

Every AI workload is either training or inference. The distinction drives infrastructure decisions—what hardware you need, where you locate it, and how the economics work.

GPU-as-a-Service: The Business Model Behind AI

GPU-as-a-Service connects AI compute demand with infrastructure supply. Here's how the business model works—from pricing models to what it takes to become a provider.

From ASICs to GPUs: Why the Transition from Mining to AI Is Harder Than You Think

Bitcoin miners are eyeing AI/HPC as the next frontier, but GPU infrastructure isn't mining with different hardware. See what the transition actually requires—and where mining experience helps or hurts.

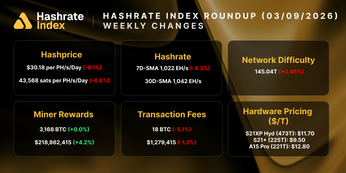

Global Hashrate Heatmap Update: Q1 2026

The latest update to Hashrate Index’s Global Hashrate Heatmap.